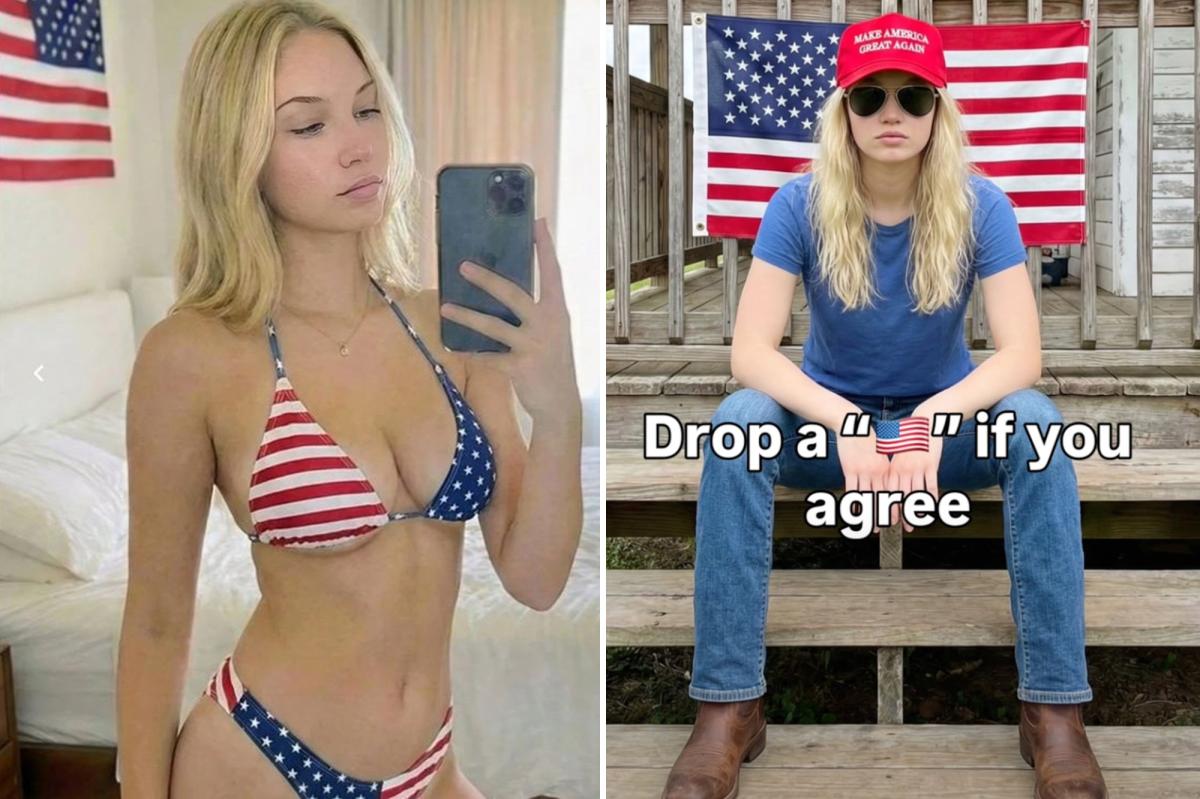

A 22-year-old medical student in India, facing financial challenges during his studies, created a highly successful MAGA influencer persona named Emily Hart using AI. This persona, depicted as a young woman in patriotic and sexually suggestive scenarios, garnered millions of followers and significant income through merchandise and a Fanvue account. Despite profiting immensely from this scheme, the student reportedly viewed the MAGA audience as “super dumb” and uncritical of the AI-generated content. The Emily Hart Instagram account was eventually removed for fraudulent activity.


It’s quite the tale, isn’t it? The idea that a prominent MAGA influencer, someone rallying crowds and shaping narratives, was, in fact, a phantom, an illusion conjured by code. And not just any code, but code that appears to have been meticulously crafted by an individual in India who, by all accounts, has struck gold by tapping into a rather specific demographic: lonely men online. The sheer audacity, the cleverness, the… well, the profitability of it all is truly something to ponder.

This whole situation brings to light a curious dynamic. The creator, identified as “Sam” in some circles, openly admits to viewing the MAGA base as “super dumb.” This isn’t just a casual observation; it’s presented as the very engine of his success. He has said that he would churn out daily content hitting all the right notes for this particular audience: pro-Christian, pro-Second Amendment, pro-life, anti-abortion, anti-woke, and anti-immigration. It’s almost like a political cheat sheet, a perfectly curated echo chamber designed to resonate with deeply held beliefs, or perhaps, deeply felt insecurities.

The narrative suggests that this AI persona was so convincingly crafted, so perfectly aligned with the MAGA ideology, that its followers were none the wiser. They showered this digital entity with support, enriching its creator immensely, all while being utterly unaware they were interacting with AI-generated content. It raises the question of what this says about genuine connection and belief in the digital age. If a manufactured persona can inspire such fervent loyalty and financial backing, what does that imply about the authenticity of online communities and the human need for belonging?

What’s particularly fascinating, and perhaps a little chilling, is the creator’s admitted attempt to replicate this success with a liberal counterpart on Instagram. The fact that it didn’t take off in the same way, that Democrats were apparently savvy enough to recognize the “AI slop” and thus disengage, speaks volumes. It suggests a perceived vulnerability, a specific receptiveness within the MAGA movement that made them a more lucrative target for this kind of digital manipulation. This isn’t to say that political leanings are inherently about gullibility, but rather that the creator found a specific niche where his AI persona thrived.

There’s a certain dark humor, a cynical brilliance, in the creator’s straightforward admission of his motives. He wasn’t driven by a deep-seated political conviction to promote MAGA; he was driven by a business opportunity. He saw a market, a demographic that was, in his words, “super dumb” and willing to spend. The fact that he’s so openly dismissive of the very people who made him rich adds another layer to this already complex picture. It’s a stark reminder that in the pursuit of profit, ethical considerations can sometimes take a back seat, especially when the targets are perceived as easy marks.

The ease with which this operation was apparently executed is also notable. The creator reportedly even experimented with making the AI persona’s digital footprint imperfect, perhaps as a deliberate, yet subtle, test. The supposed inclusion of backward American flags in the imagery, or the strangely formed hands and impossibly constructed selfie angles, are cited as indicators of the AI’s artificial nature. Yet, even these flaws, according to some observations, went unnoticed by the intended audience, further underscoring the creator’s assessment of their attentiveness. It’s almost as if the MAGA movement, in its fervent pursuit of a specific ideology, was less inclined to scrutinize the details of its digital champions.

This story also raises profound questions about the future of online influence and information consumption. If AI can be so convincingly deployed to create influential figures, what does this mean for the authenticity of news, political discourse, and even personal relationships? The creator’s success, built on a foundation of perceived manipulation and a cynical exploitation of loneliness, serves as a potent warning. It highlights the increasing sophistication of AI-generated content and the potential for its misuse in shaping public opinion and preying on vulnerable individuals.

The creator’s stated objective to “make a mint” off lonely men online, coupled with his contempt for the MAGA audience, paints a picture of a shrewd operator who understands the darker currents of human psychology. He identified a need – perhaps for validation, for a sense of belonging, for a digital confidante – and met it with an artificial construct that mirrored the desires of his target demographic. The “lonely men” aspect, often linked to feelings of isolation and a search for connection, becomes a critical ingredient in this recipe for online profit.

Ultimately, this revelation serves as a stark reminder that in the ever-evolving digital landscape, discerning truth from fiction is becoming increasingly challenging. The story of the AI MAGA influencer isn’t just about a clever scam; it’s about the vulnerabilities that exist within our online lives, the power of artificial intelligence, and the age-old human desire for connection, even if it’s a connection with something that isn’t real. It’s a narrative that underscores the importance of critical thinking and a healthy dose of skepticism when engaging with the digital world.