Following a US and Israeli announcement of a “major combat operation” against Iran, X became inundated with disinformation. Numerous posts, many from verified accounts, shared misleading claims about the attack’s scale and locations, often using old or AI-generated footage. This rapid spread of false narratives highlights X’s ongoing struggle with disinformation during significant global events, with corrections proving insufficient against viral, inaccurate content.


It appears that X, formerly known as Twitter, is navigating a treacherous landscape of misinformation in the wake of reports detailing alleged attacks by the United States and Israel on Iran. This deluge of false narratives isn’t new to the platform; some observations suggest that X has been grappling with disinformation for quite some time. The platform’s design and recent algorithmic shifts seem to be contributing factors, with critics arguing that they prioritize far-right content and make it easier for inaccuracies to spread.

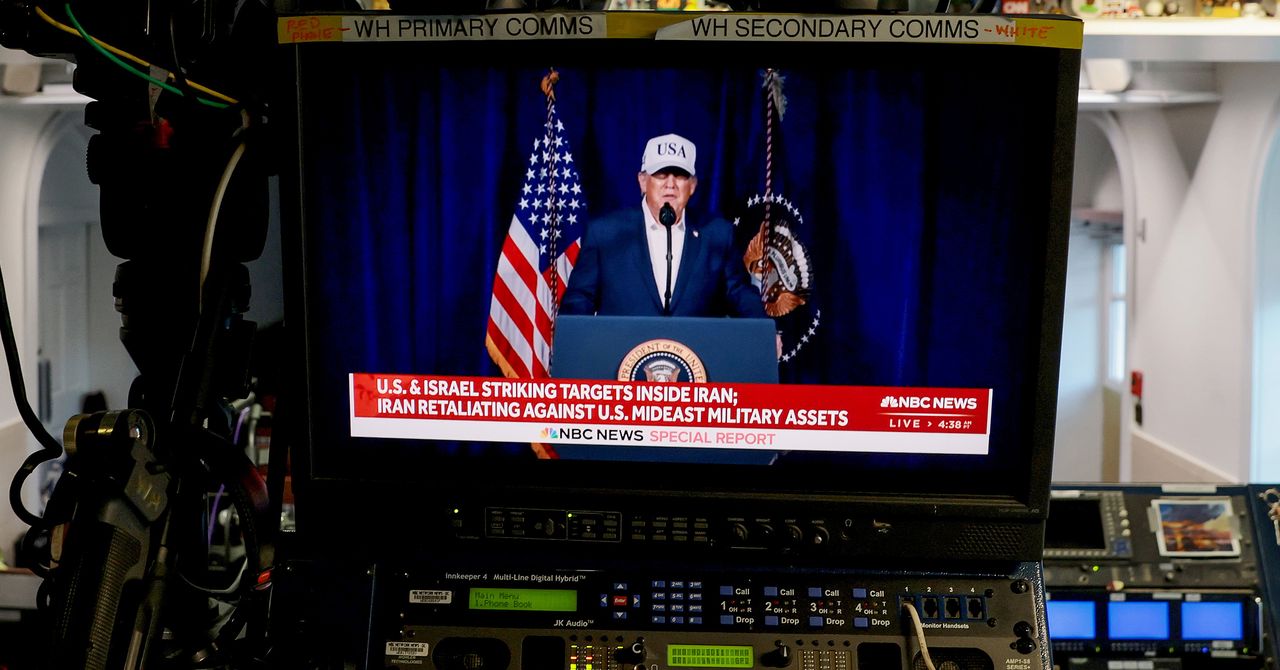

The situation is particularly concerning when one considers the recent alleged attacks. Reports indicate that in the immediate aftermath of Donald Trump’s announcement of a “major combat operation” against Iran, X was swiftly flooded with misleading claims. These posts, some of which have garnered millions of views, often misrepresent the locations and the true scale of the operations. It’s a stark illustration of how the platform can become a breeding ground for confusion and deception during critical global events.

Digging deeper into the content being circulated, it becomes clear that a significant portion of it is not new or factual. We’re seeing old video footage being presented as recent evidence of the attacks, creating a distorted reality for users. Some posts have been found to misattribute attack footage to incorrect geographical locations, further muddying the waters. The use of altered images or content generated by artificial intelligence is also a prominent feature, alongside video game footage being passed off as actual conflict scenes. This deliberate manipulation makes it incredibly difficult for users to discern what is real and what is fabricated.

There’s a prevailing sentiment that Elon Musk acquired Twitter in order to turn it into a platform for his own right-wing propaganda, and similar observations are made about other media entities. On this view, the current state of X is not an accident but a calculated outcome of its ownership and management, with the promotion of specific ideological viewpoints and the amplification of certain types of content as a core strategy.

The financial incentives for spreading information, or misinformation, on X are also noted. Reports suggest significant spending on advertising campaigns, with one instance highlighting a nation spending nearly a million dollars monthly on Twitter ads. This points to a deliberate effort by various actors to influence the discourse and shape public perception through the platform. The effectiveness of such strategies is amplified by the platform’s algorithmic design, which appears to favor engagement, even if that engagement stems from sensationalized or misleading content.

The sheer volume of fabricated content is described as staggering. Some individuals recall a time when the internet was envisioned as an “Information Superhighway,” but now it seems to run parallel to a “Disinformation Superhighway.” This shift is not unique to X; other social media platforms like Reddit and Facebook are also reportedly drowning in disinformation. The experience of users trying to stay informed during events like the unrest in Mexico highlights the pervasive nature of this problem across different platforms.

The deliberate architectural choices made on X are frequently cited as the root cause of this problem. Specific mentions are made of how Elon Musk allegedly rewrote the platform’s algorithms to deliberately amplify disinformation campaigns and ensure a constant stream of far-right content. This intentional manipulation of the information flow is seen as the primary reason for the platform’s current state, turning it into what some describe as a “cesspool” or a “giant cesspool of bots and agitators.”

The consequence of this environment is that for anyone still relying on X for news, the advice given is stark: “just drink a bottle of bleach.” This extreme sentiment underscores the perceived unreliability and dangerous nature of the information being disseminated. The expectation is that users who continue to consume news from such a source will inevitably be misled, with potentially severe consequences for their understanding of global events.

Furthermore, the idea that this is the intended outcome is a recurring theme. “Working as intended then” is a common refrain, suggesting a resignation to the fact that X is functioning precisely as designed by its current leadership. The platform is viewed not as a failed attempt at communication but as a successful tool for its intended purpose: to sow disinformation and serve as a propaganda machine for specific interests, often described as “pro-oligarch.”

The broader internet is not exempt from this phenomenon. While X is currently under scrutiny, it’s acknowledged that Reddit, the wider internet, and older media outlets are all struggling with the pervasive spread of disinformation. Some go as far as to say that the only way to truly know what’s happening is to witness events firsthand. This points to a systemic issue within the digital information ecosystem.

The platform’s transformation is viewed as a dramatic shift from its previous iterations. Many express nostalgia for a time when Twitter might have been considered a more reliable source, but unequivocally state that it is “definitely NOT a source you should trust in 2026.” The implication is that the decay of its trustworthiness is ongoing and accelerating.

The issue extends to other platforms as well. Bluesky is mentioned as exhibiting similar problems, including bot accounts with AI avatars disseminating fabricated images of people celebrating. This suggests that the tactics used to spread disinformation are not confined to a single platform and may be part of a wider effort.

Ultimately, the consensus emerging from these observations is that X is steeped in disinformation, a situation that has been exacerbated, if not entirely engineered, by its current ownership and the subsequent changes to its operational design. In this view, the platform has become a tool for manipulation, and its users are left to navigate a minefield of falsehoods.